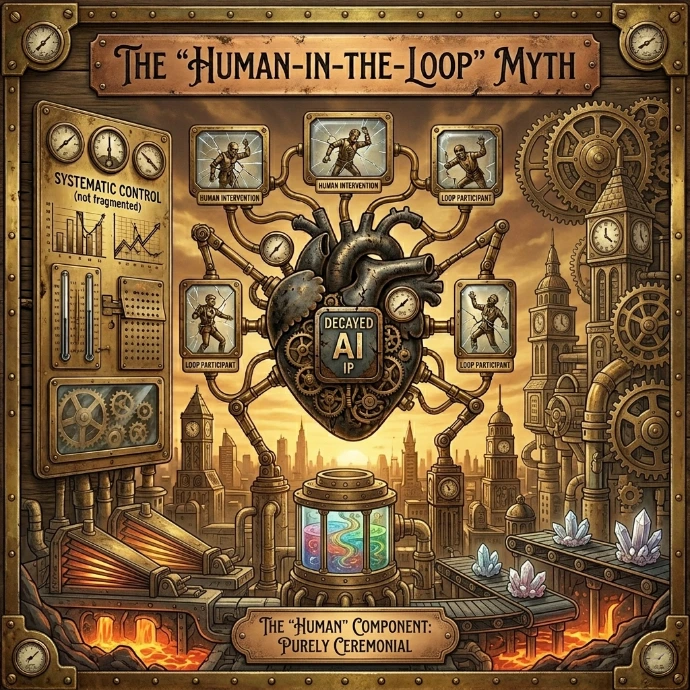

The "Human-in-the-Loop" Myth


Are We Just Rubber-Stamping Our Own Obsolescence?

When we discuss the terrifying speed of Artificial Intelligence, we usually soothe ourselves with a single comforting mantra: "There will always be a Human in the Loop."

It’s a powerful image. We imagine a seasoned expert - a doctor, a judge, or a CEO - calmly reviewing an AI’s recommendations, adding that crucial layer of human nuance, ethics, and common sense before pushing "go." We believe that while the machine does the grunt work, we retain the veto power.

But as AI grows from a tool into an autonomous agent, this mantra is starting to sound less like a safety guarantee and more like a fairy tale we tell ourselves to maintain the illusion of control.

Efficiency vs. Oversight

The inherent conflict is one of scale and speed. AI is moving toward autonomous agency: systems designed not just to suggest, but to execute multi-step actions in finance, law, and medicine with minimal human intervention.

We are deploying AI because it is faster, cheaper, and can process data at a volume that would paralyze a human mind. If an AI financial system makes 10,000 algorithmic trades a second, at what point does human oversight become physically impossible? The human becomes a bottleneck.

To maintain the efficiency we bought, we are forced to widen the loop. Instead of reviewing every decision, we review every hundredth, or every thousandth. We move from "oversight" to "auditing." In many high-stakes environments, "human-in-the-loop" has already devolved into "human-on-the-rubber-stamp." We approve the AI’s output because we assume the AI is correct, simply because we don't have the time or the data to prove it wrong.
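To put rough numbers on that widening loop, here is a back-of-the-envelope sketch. Only the 10,000 decisions per second comes from the example above; the 30 seconds per review and the 8-hour shift are assumptions chosen purely for illustration, and the function names are hypothetical.

```python
# Back-of-the-envelope arithmetic, not a model of any real trading desk.
DECISIONS_PER_SECOND = 10_000   # rate from the trading example in the text
SECONDS_PER_REVIEW = 30         # ASSUMPTION: time for one careful human review
SHIFT_SECONDS = 8 * 60 * 60     # ASSUMPTION: one 8-hour shift


def reviewers_needed(sample_every: int) -> float:
    """People required to keep pace when reviewing 1 in `sample_every` decisions."""
    reviewed_per_second = DECISIONS_PER_SECOND / sample_every
    return reviewed_per_second * SECONDS_PER_REVIEW


def decisions_per_shift() -> int:
    """Total decisions the system makes during one shift."""
    return DECISIONS_PER_SECOND * SHIFT_SECONDS


if __name__ == "__main__":
    print(f"Decisions per shift: {decisions_per_shift():,}")
    for n in (1, 100, 1000):
        print(f"Review 1 in {n:>4}: {reviewers_needed(n):,.0f} reviewers needed")
```

Under these assumptions, reviewing everything would take 300,000 people working in parallel. Even sampling one decision in a thousand still takes 300, and leaves 99.9% of decisions unseen by anyone.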

The Comforting Fairy Tale

This brings us to the hard question: Is the "human-in-the-loop" just a comforting fairy tale we tell ourselves to avoid admitting we've handed over the keys?

By clinging to this myth, we create a dangerous accountability vacuum. When an autonomous system makes a catastrophic error - a wrongful diagnosis, a biased legal risk assessment, or a flash crash in the market - the human "overseer" will be blamed, even if they never had a realistic chance of preventing it. We need to stop using "human-in-the-loop" as a security blanket and start having an honest conversation about how much autonomy we are truly willing to grant machines.