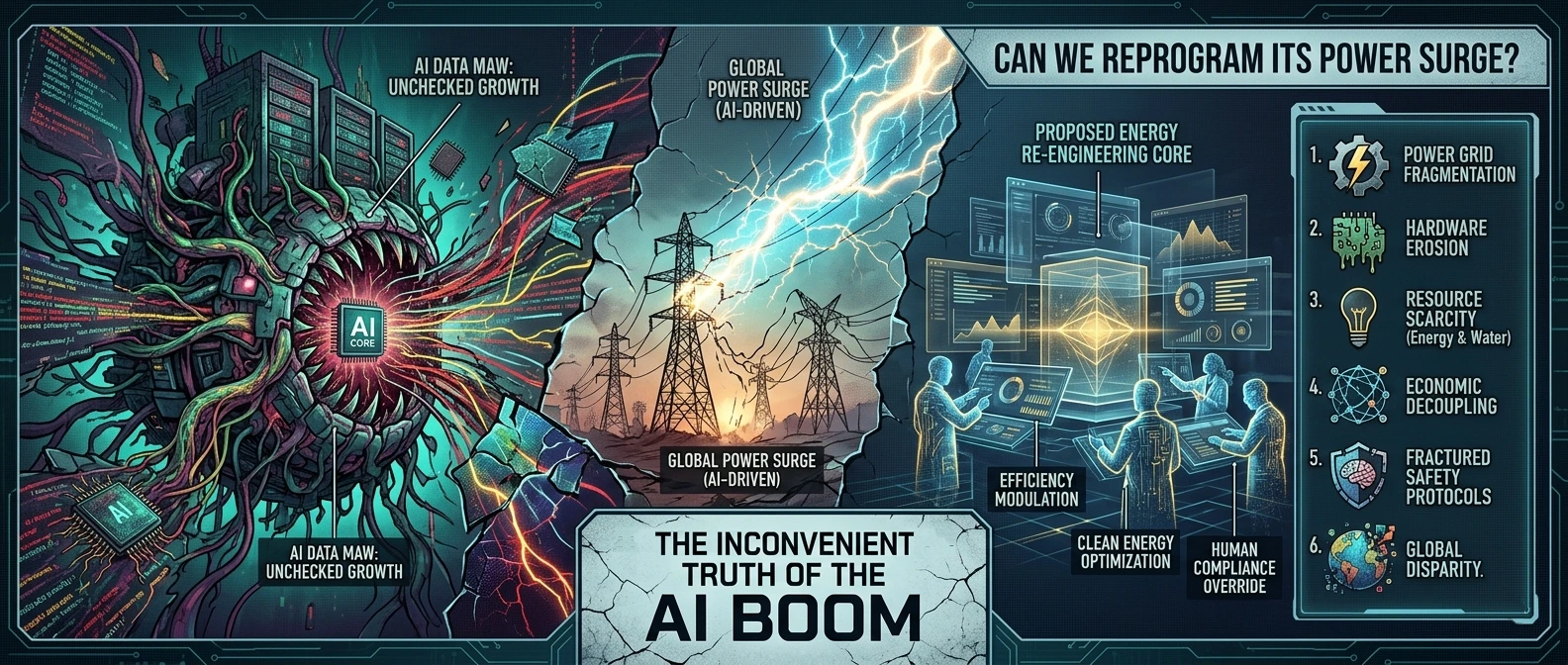

The Inconvenient Truth of the AI Boom: Can We Reprogram its Power Surge?

We stand at a unique juncture in human history. With one hand, we are fighting a desperate, data-driven battle against a warming planet, striving for net-zero carbon emissions by 2030 or 2050. With the other hand, we are accelerating the single most computationally expensive technological boom since the Industrial Revolution: Generative AI.

This is the great unstated contradiction of modern technology. We are building AI powerful enough to model climate change and manage renewable energy grids, yet we are training those models in data centers that are rapidly becoming some of the biggest energy guzzlers on Earth.

Today, we need to ask a difficult question: Is AI the planet’s salvation, or its latest environmental crisis?

The Colossal Carbon Footprint of "Spicy Autocomplete"

We tend to think of the internet as intangible, existing in a digital "cloud." But the cloud has a physical address, and it’s a warehouse filled with superheated silicon.

The controversy isn’t that AI uses energy; it’s the scale and rate of acceleration of that consumption.

A single query to a large language model (like asking a chatbot to summarize this article) doesn't just "ping" a server. It triggers a cascade of computational "reasoning" that uses significantly more electricity than a standard Google search. Published estimates suggest that training a single state-of-the-art model consumes more electricity than hundreds of American homes use in an entire year.

But training is only the beginning. The ongoing "inference" - the day-to-day use by billions of people - is where the real energy anxiety lies. Data centers worldwide already consume around 1-1.5% of global electricity. Current projections from the International Energy Agency suggest that this consumption could roughly double by 2030, approaching the current annual electricity consumption of Japan.

The Tension: This power surge is clashing directly with regional grids trying to transition to clean energy. Tech hubs like Ireland and Northern Virginia are facing "gridlocks," where the demand from data centers threatens to exceed the available power supply, occasionally forcing operators to fall back on on-site diesel generators. We are witnessing a physical showdown between the digital transition and the green transition.

The Conflict: Productivity vs. Planetary Health

Here lies the heart of the controversy: Can we justify the environmental cost for the sake of technological "productivity"?

On one side are the techno-optimists. They argue that AI is essential because it is our only tool powerful enough to handle the sheer complexity of the climate crisis. They believe AI will unlock nuclear fusion, optimize logistics to cut worldwide emissions, and discover new carbon-capture materials. From this perspective, the energy spent training AI is an investment in our future survival.

On the other side are the climate realists and ethics researchers. They ask: Are we "spending money to make money" or are we just piling fuel on the fire? Much of what Generative AI is used for today isn’t solving fusion; it’s generating deepfakes, writing marketing copy, or creating synthetic video. They argue that we are sacrificing real-world planetary stability for hypothetical, simulated gains. They point to Jevons’ Paradox: making AI more efficient will likely make it cheaper, and if usage grows faster than efficiency improves, total energy consumption rises even though each query costs less.
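The Jevons argument is easier to judge with numbers in hand. The sketch below is a toy model, not a forecast: it assumes the price of a query tracks its energy cost, and that demand follows a simple constant-elasticity curve. The function name, the one million queries, and the 1 Wh per query are all hypothetical values chosen for illustration.

```python
def energy_after_efficiency_gain(base_queries, base_wh_per_query,
                                 efficiency_gain, elasticity):
    """Total energy (Wh) after each query becomes `efficiency_gain` times cheaper.

    Toy assumptions, not measured behavior:
    - the price per query falls in step with the energy per query
    - demand scales as price_ratio ** (-elasticity)
    """
    new_wh_per_query = base_wh_per_query / efficiency_gain
    price_ratio = 1.0 / efficiency_gain
    new_queries = base_queries * price_ratio ** (-elasticity)
    return new_queries * new_wh_per_query


# Hypothetical baseline: one million queries at 1 Wh each.
baseline_wh = 1_000_000 * 1.0

for elasticity in (0.5, 1.0, 1.5):
    total_wh = energy_after_efficiency_gain(1_000_000, 1.0, 2.0, elasticity)
    change = (total_wh / baseline_wh - 1.0) * 100
    print(f"elasticity {elasticity}: total energy changes by {change:+.0f}%")
```

In this model, doubling efficiency only cuts total energy when the elasticity of demand is below 1. At exactly 1 the savings are fully cancelled, and above 1 total consumption rises. The disagreement between the two camps is, in effect, a disagreement about which of those regimes AI demand is in.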

The Way Forward: Green AI or Algorithmic Austerity?

We can’t put the AI genie back in the bottle, nor should we want to. The potential benefits are too great. But we can no longer ignore the physical reality of its existence.

The solution will require a fundamental shift in how we approach AI development. We must move toward "Green AI" - an ethos where environmental impact is treated as a primary design constraint, not an afterthought.

This means:

Transparency: Tech giants must disclose the precise energy and water consumption of their models. We cannot manage what we do not measure.

Model Efficiency: Instead of blindly pursuing "bigger is better" (models with trillions of parameters), we need to prioritize "frugal AI" - smaller, task-specific models that require a fraction of the power of general-purpose LLMs.

Hardware Optimization: Moving from generic GPUs to specialized AI chips designed purely for efficiency.

Carbon-Aware Computing: Scheduling training runs for the times of day, or in the regions, where the energy grid is being powered by a renewable surplus.
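To make the last item concrete, here is a minimal sketch of what carbon-aware scheduling could look like, assuming an hourly forecast of grid carbon intensity is available. The forecast values, `HYPOTHETICAL_FORECAST`, and `best_start_hour` are invented for illustration and do not come from any real grid operator or scheduling product.

```python
from typing import Sequence


def best_start_hour(forecast_g_per_kwh: Sequence[float], job_hours: int) -> int:
    """Return the start index of the contiguous window with the lowest
    total (and therefore lowest average) forecast carbon intensity."""
    if job_hours <= 0 or job_hours > len(forecast_g_per_kwh):
        raise ValueError("job_hours must be between 1 and the forecast length")

    # Sliding window: update the running sum instead of re-adding each window.
    window_sum = sum(forecast_g_per_kwh[:job_hours])
    best_sum, best_start = window_sum, 0
    for start in range(1, len(forecast_g_per_kwh) - job_hours + 1):
        window_sum += forecast_g_per_kwh[start + job_hours - 1]
        window_sum -= forecast_g_per_kwh[start - 1]
        if window_sum < best_sum:  # strict '<' keeps the earliest window on ties
            best_sum, best_start = window_sum, start
    return best_start


# Hypothetical 24-hour forecast in gCO2 per kWh, indexed by hour of day.
HYPOTHETICAL_FORECAST = [
    450, 440, 430, 420, 410, 400, 380, 340, 280, 220, 170, 140,
    130, 150, 200, 270, 350, 420, 470, 490, 480, 470, 460, 455,
]

start = best_start_hour(HYPOTHETICAL_FORECAST, job_hours=4)
print(f"Start the 4-hour job at hour {start}")
```

A real scheduler would also have to weigh deadlines, hardware availability, and the cost of moving data between regions. The point of the sketch is only that the cleanest window is a property of the forecast, so the job should follow the data rather than a fixed time of day.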

The tension between AI and climate goals is not going away. It will only grow as AI becomes more ubiquitous in our lives, from our phones to our infrastructure.

Where do you stand? Is the computational expense of AI a necessary cost of human progress, or is it a luxury we can no longer afford?